
The Freon Interpreter Framework

During Freon’s generation step, several files are produced that together form the foundation of an interpreter.

As a language engineer, you need to change only one file: <<LanguageName>>Interpreter.ts, where <<LanguageName>> is, of course, the name of your DSL. In our Expressions example, it is called ExpressionsInterpreter.ts.

The class defined in this file inherits from the class <<LanguageName>>InterpreterBase, which provides one evaluation function for every concept defined in your language. By overriding these functions, you define the value associated with each AST node. The following is an example of an evaluation function in ExpressionsInterpreterBase.ts:

// Expressions/src/freon/interpreter/gen/ExpressionsInterpreterBase.ts#L35-L37

All evaluation functions are similar. The first parameter is the node for which a value needs to be determined. The second parameter, of type InterpreterContext, provides the context in which the expression should be evaluated. This context is used to store the values of constants, variables, etc., so that they are available for use in the function.

Initially, the base class functions throw an exception when called, as shown above. When overridden, they should return an object of type RtObject (short for "runtime object") from Freon’s Runtime Object Library.
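To make this division of labour concrete, here is a minimal sketch of the pattern using hypothetical stand-in classes (MiniInterpreterBase, MiniInterpreter, NumberLiteral, MiniRtNumber, MiniContext). They are not Freon's generated code. They only mimic the idea: the base class has one eval function per concept that throws, and the hand-written subclass overrides it to return a runtime value.

```typescript
// Hypothetical, simplified stand-ins; these are NOT Freon's generated classes.

// Stand-in for a runtime value (Freon uses RtObject and its subclasses).
class MiniRtNumber {
  constructor(readonly value: number) {}
}

// Stand-in for InterpreterContext; empty because literals need no context.
class MiniContext {}

// Stand-in for a model (AST) node of a number literal concept.
class NumberLiteral {
  constructor(readonly value: number) {}
}

// Plays the role of the generated <<LanguageName>>InterpreterBase:
// one eval function per concept, throwing until it is overridden.
class MiniInterpreterBase {
  evalNumberLiteral(_node: NumberLiteral, _ctx: MiniContext): MiniRtNumber {
    throw new Error("evalNumberLiteral is not implemented");
  }
}

// Plays the role of the hand-written <<LanguageName>>Interpreter.
class MiniInterpreter extends MiniInterpreterBase {
  override evalNumberLiteral(node: NumberLiteral, _ctx: MiniContext): MiniRtNumber {
    return new MiniRtNumber(node.value);
  }
}

const literal = new NumberLiteral(42);
let baseThrew = false;
try {
  new MiniInterpreterBase().evalNumberLiteral(literal, new MiniContext());
} catch {
  baseThrew = true;
}
console.log(baseThrew); // true
console.log(new MiniInterpreter().evalNumberLiteral(literal, new MiniContext()).value); // 42
```

Only the subclass contains hand-written logic, so regenerating the base class does not overwrite your evaluation code.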

Here is an example of how evalNumberLiteralExpression is overridden:

```typescript
// Expressions/src/custom/interpreter/ExpressionsInterpreter.ts#L36-L38
override evalNumberLiteralExpression(node: NumberLiteralExpression, ctx: InterpreterContext): RtObject {
    // Wrap the literal's value from the model in a runtime number
    return new RtNumber(node.value);
}
```

Freon’s Runtime Object Library

Each evaluation result in Freon is represented as an RtObject (or one of its subclasses), which ensures a strict separation between the model (M1) and runtime (M0) levels.

There can, of course, be references from RtObjects to instances in the model, e.g. for traceability.

Freon provides a standard set of runtime classes ready for immediate use. These include:

- RtNumber: represents numeric values.
- RtString: represents strings.
- RtBoolean: represents boolean values.
- RtArray: represents arrays.
- RtError: represents errors.

You can extend these foundational classes to create domain-specific runtime objects.
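As an illustration of a domain-specific runtime object, the sketch below defines a money value that refuses to add amounts in different currencies and keeps an optional reference back to the model node that produced it, for traceability. MiniRtObject and MiniRtMoney are hypothetical names; MiniRtObject is a simplified stand-in and does not reproduce the actual members of Freon's RtObject.

```typescript
// Hypothetical sketch: MiniRtObject is a simplified stand-in, not Freon's RtObject.
abstract class MiniRtObject {
  // Optional reference to the model (M1) node that produced this value,
  // e.g. for traceability.
  constructor(readonly source?: object) {}

  abstract equals(other: MiniRtObject): boolean;
}

// A domain-specific runtime object: an amount of money in one currency.
class MiniRtMoney extends MiniRtObject {
  constructor(readonly amount: number, readonly currency: string, source?: object) {
    super(source);
  }

  override equals(other: MiniRtObject): boolean {
    return other instanceof MiniRtMoney
      && other.amount === this.amount
      && other.currency === this.currency;
  }

  // Domain rule: only amounts in the same currency can be added.
  add(other: MiniRtMoney): MiniRtMoney {
    if (other.currency !== this.currency) {
      throw new Error(`Cannot add ${other.currency} to ${this.currency}`);
    }
    return new MiniRtMoney(this.amount + other.amount, this.currency);
  }
}

const priceNode = { kind: "PriceLiteral" }; // hypothetical M1 node
const price = new MiniRtMoney(10, "EUR", priceNode);
const tax = new MiniRtMoney(2, "EUR");
console.log(price.add(tax).amount); // 12
console.log(price.source === priceNode); // true
console.log(price.equals(new MiniRtMoney(10, "EUR"))); // true
```

Putting rules such as the currency check in the runtime class keeps the evaluation functions themselves short.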

The model and runtime levels are part of a three-level hierarchy:

- The language definition, which defines the available concepts, often called the M2 level. In Freon this is represented by the language definition in the .ast files. In Java this would be the Java Language Specification.

- The model, which contains instances of the language concepts, called the M1 level. In Freon this is what you edit in a Freon application. In Java this would be a Java program consisting of Java classes.

- The runtime level, which contains the values resulting from executing or interpreting the model, called the M0 level. In Freon this is the result of running the interpreter, or of executing code generated from the model (M1 level). In Java this corresponds to the execution of a Java program.
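A small TypeScript sketch can make the three levels tangible. All names here (ConceptDefinition, ModelNode, RuntimeNumber, interpretNumberLiteral) are hypothetical and only mirror the roles described in the list above; they are not Freon types.

```typescript
// Hypothetical sketch of the three levels; none of these types are Freon APIs.

// M2: the language definition, i.e. which concepts exist
// (in Freon, this is what the .ast files describe).
interface ConceptDefinition {
  conceptName: string;
  properties: string[];
}
const numberLiteralConcept: ConceptDefinition = {
  conceptName: "NumberLiteralExpression",
  properties: ["value"],
};

// M1: a model node, an instance of a concept (what you edit in a Freon application).
interface ModelNode {
  concept: ConceptDefinition;
  values: Record<string, number>;
}
const three: ModelNode = { concept: numberLiteralConcept, values: { value: 3 } };

// M0: a runtime value produced by interpreting the model. It is a separate
// object, but keeps a reference to its source node for traceability.
class RuntimeNumber {
  constructor(readonly value: number, readonly source: ModelNode) {}
}

function interpretNumberLiteral(node: ModelNode): RuntimeNumber {
  return new RuntimeNumber(node.values["value"], node);
}

const result = interpretNumberLiteral(three);
console.log(result.value); // 3
console.log(result.source.concept.conceptName); // NumberLiteralExpression
```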

Interpreter Context

Every AST node is evaluated within a certain context, represented by the ctx: InterpreterContext parameter.

For instance, if in the model we refer to a parameter in the body of a function, we need to know the value of the parameter to be able to calculate the value of the body. This is achieved through the context as follows:

```typescript
// Expressions/src/custom/interpreter/ExpressionsInterpreter.ts#L56-L65
override evalFunctionCallExpression(node: FunctionCallExpression, ctx: InterpreterContext): RtObject {
    const calledFunction = node.$calledFunction;
    // New context whose parent is the caller's context
    const functionContext = new InterpreterContext(ctx);
    node.arguments.forEach((arg, index) => {
        // Arguments are evaluated in the caller's context
        const argumentValue = main.evaluate(arg, ctx);
        // Add the parameter to the context with the value of the evaluated argument
        functionContext.set(calledFunction.parameters[index], argumentValue);
    });
    // Evaluate the called function in the new context
    return main.evaluate(calledFunction, functionContext);
}
```

The evaluation of the function call expression has two parts.

First, a new InterpreterContext is created with the original context as its parent. This ensures that all values in the original context remain accessible. Then each argument of the function call is evaluated, and its value is stored in the new context with the corresponding parameter as its key.

Second, the function is evaluated using main.evaluate(calledFunction, functionContext), with the new context as its argument.
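The parent-chain behaviour described above can be sketched with a tiny context class. ScopedContext, its set/find methods, and the key objects below are hypothetical; this is a simplified stand-in written for illustration, not Freon's InterpreterContext implementation.

```typescript
// Hypothetical, simplified stand-in for InterpreterContext; not Freon's code.
// A child context stores its own bindings and falls back to its parent.
class ScopedContext<K, V> {
  private readonly values = new Map<K, V>();

  constructor(private readonly parent?: ScopedContext<K, V>) {}

  set(key: K, value: V): void {
    this.values.set(key, value);
  }

  // Look in this context first, then walk up the parent chain.
  find(key: K): V | undefined {
    if (this.values.has(key)) {
      return this.values.get(key);
    }
    return this.parent?.find(key);
  }
}

// Hypothetical model nodes used as keys, as parameters are in the snippet above.
const piConstant = { name: "pi" };
const startParam = { name: "start" };

const callerCtx = new ScopedContext<object, number>();
callerCtx.set(piConstant, 3.14);

const functionCtx = new ScopedContext<object, number>(callerCtx);
functionCtx.set(startParam, 1);

console.log(functionCtx.find(startParam)); // 1
console.log(functionCtx.find(piConstant)); // 3.14 (found in the parent)
console.log(callerCtx.find(startParam)); // undefined (bindings do not leak upward)
```

Because the child only adds bindings to its own map, the caller's context is left unchanged when the function call returns.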

If we come across a ParameterRef while evaluating the function body, its evaluation can simply look up the value of the parameter:

```typescript
// Expressions/src/custom/interpreter/ExpressionsInterpreter.ts#L71-L73
override evalParameterRef(node: ParameterRef, ctx: InterpreterContext): RtObject {
    // Find the value bound to the referenced parameter in the current context (or its parents)
    return ctx.find(node.$parameter);
}
```

Note that the parameter lookup yields a different value for each call to the function, which is exactly what we need.
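To see why the same ParameterRef yields a different value per call, here is a self-contained mini-interpreter for a hypothetical toy language with numbers, parameter references, addition, and function calls. Every name in it (ScopedContext, Expr, FunctionDef, evaluate, doubleFn) is an assumption made for illustration and loosely follows the two Freon snippets above; it is not Freon code.

```typescript
// Hypothetical mini-language loosely modelled on the Expressions example;
// none of these types are Freon classes.

class ScopedContext {
  private readonly values = new Map<object, number>();
  constructor(private readonly parent?: ScopedContext) {}

  set(key: object, value: number): void {
    this.values.set(key, value);
  }

  find(key: object): number {
    const own = this.values.get(key);
    if (own !== undefined) return own;
    if (this.parent) return this.parent.find(key);
    throw new Error("No value for key in context");
  }
}

interface Parameter { name: string }
interface FunctionDef { name: string; parameters: Parameter[]; body: Expr }
type Expr =
  | { kind: "Number"; value: number }
  | { kind: "ParamRef"; param: Parameter }
  | { kind: "Plus"; left: Expr; right: Expr }
  | { kind: "Call"; fn: FunctionDef; args: Expr[] };

function evaluate(e: Expr, ctx: ScopedContext): number {
  switch (e.kind) {
    case "Number":
      return e.value;
    case "ParamRef":
      // Like evalParameterRef: the value comes from the current context.
      return ctx.find(e.param);
    case "Plus":
      return evaluate(e.left, ctx) + evaluate(e.right, ctx);
    case "Call": {
      // Like evalFunctionCallExpression: a fresh child context per call,
      // with each argument evaluated in the caller's context.
      const fn = e.fn;
      const functionCtx = new ScopedContext(ctx);
      e.args.forEach((arg, index) => functionCtx.set(fn.parameters[index], evaluate(arg, ctx)));
      return evaluate(fn.body, functionCtx);
    }
    default:
      throw new Error("Unknown expression kind");
  }
}

// double(x) = x + x
const x: Parameter = { name: "x" };
const doubleFn: FunctionDef = {
  name: "double",
  parameters: [x],
  body: { kind: "Plus", left: { kind: "ParamRef", param: x }, right: { kind: "ParamRef", param: x } },
};

const rootCtx = new ScopedContext();
const call = (n: number): Expr => ({ kind: "Call", fn: doubleFn, args: [{ kind: "Number", value: n }] });
console.log(evaluate(call(2), rootCtx)); // 4: x is bound to 2 in this call's context
console.log(evaluate(call(5), rootCtx)); // 10: the same ParamRef now finds 5
```

The ParamRef nodes in the body of doubleFn are the same objects in both calls; only the context they are evaluated in differs.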

Running the Interpreter

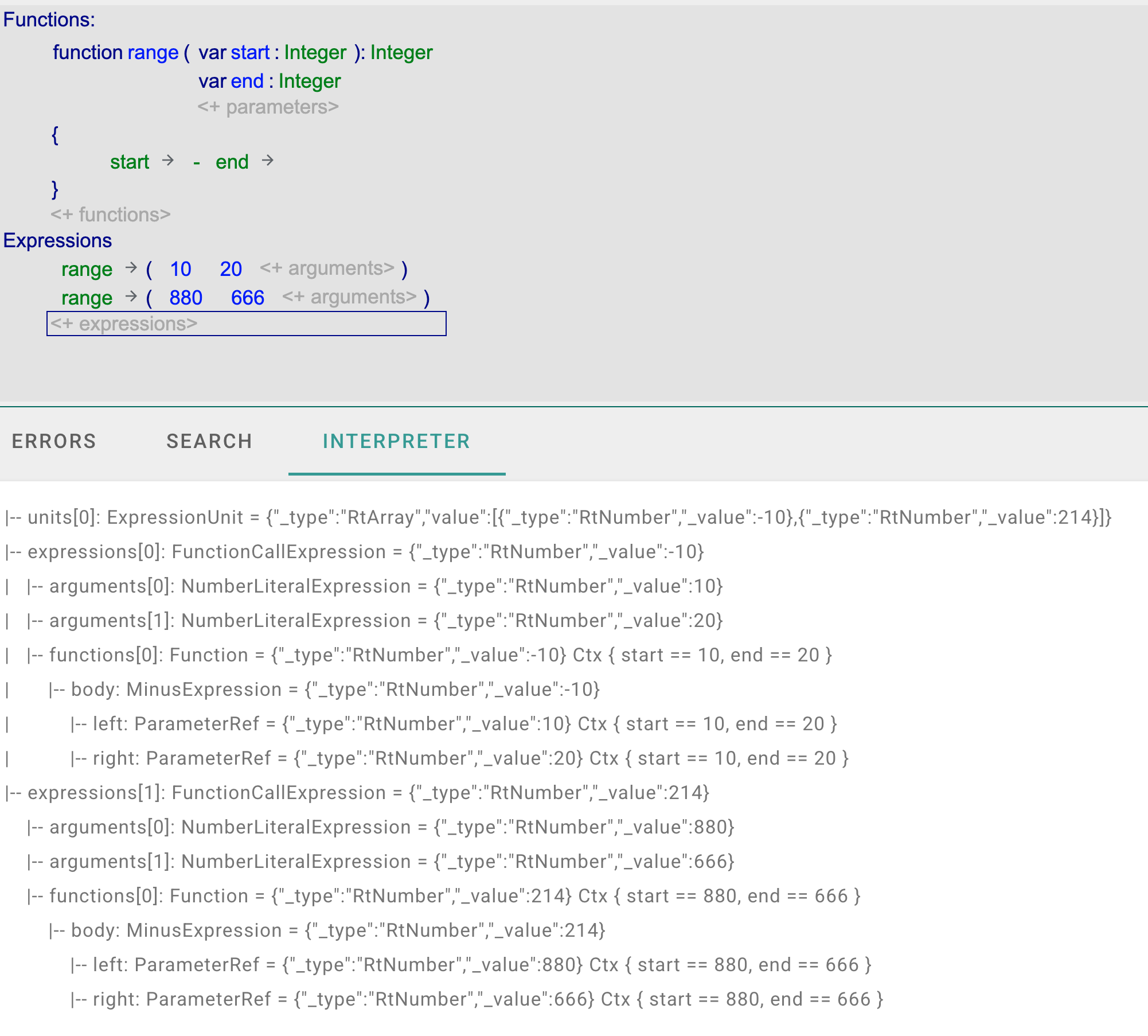

The screenshot shows the interpreter output for our Expressions language.

The model defines one function, range, and two expressions. When you run the interpreter from the toolbar in the Freon editor, a trace of the evaluation is shown in the Interpreter view.

The trace contains two function evaluations. At the end of each of these lines, the context is shown, with different values for the start and end parameters in each function call.